The MIT School of Engineering’s mission is to educate the next generation of engineering leaders, to create new knowledge, and to serve society.

Tackling cancer at the nanoscale

When Paula Hammond first arrived on MIT’s campus as a first-year student in the early 1980s, she wasn’t sure if she belonged. In fact, a ...

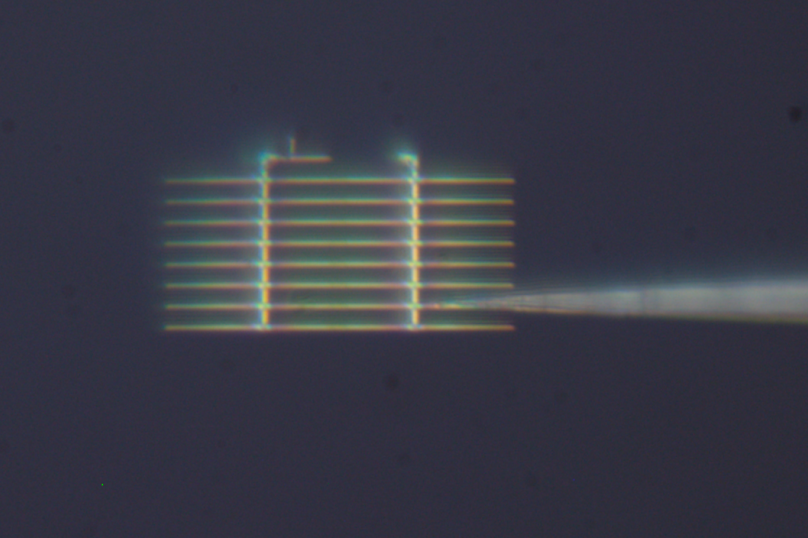

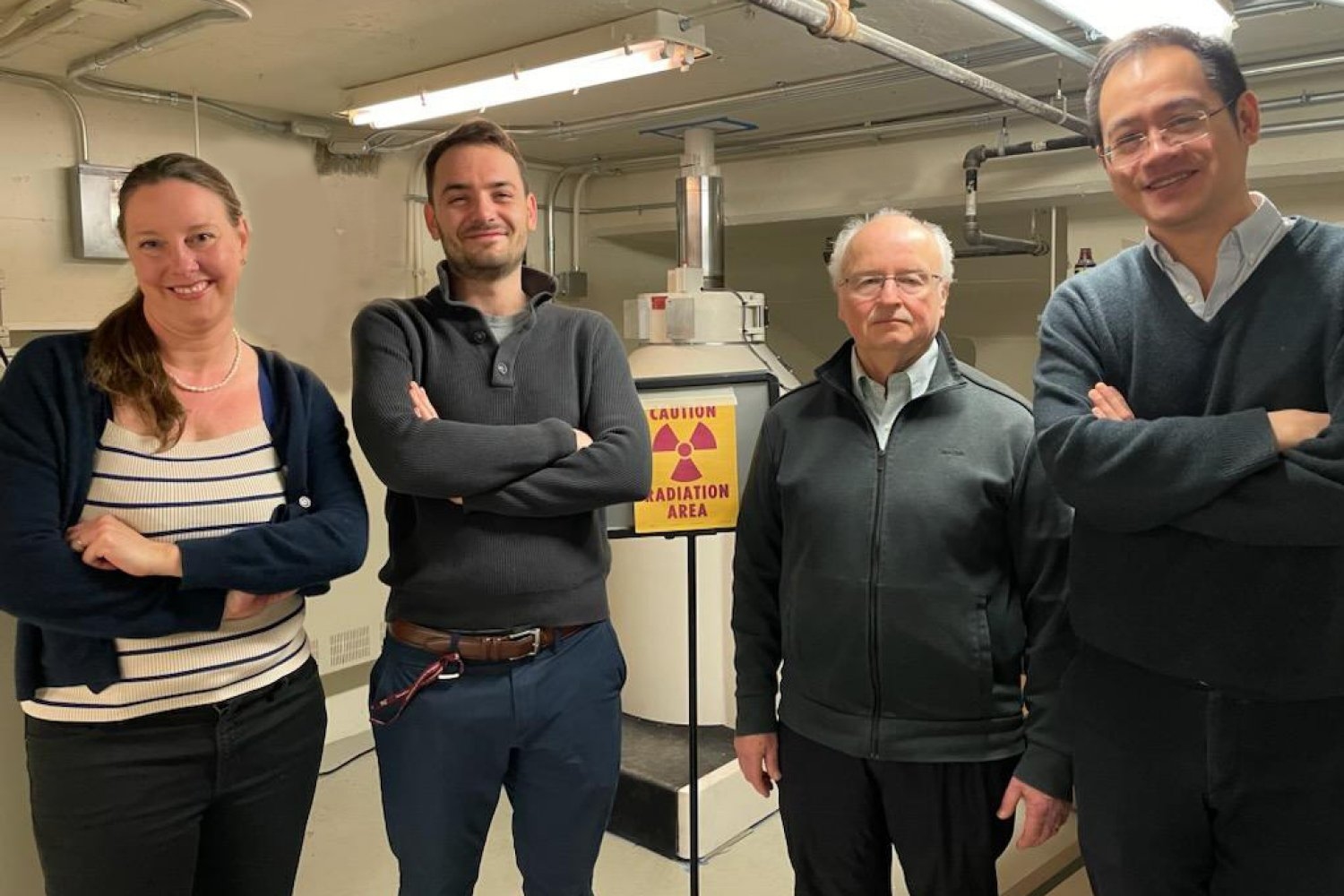

A new way to detect radiation involving cheap ceramics

The radiation detectors used today for applications like inspecting cargo ships for smuggled nuclear materials are expensive and cannot ...

- To build a better AI helper, start by modeling the irrational behavior of humans

- Advancing technology for aquaculture

- New major crosses disciplines to address climate change

- New flight procedures to reduce noise from aircraft departing and arriving at Boston Logan Airport

- Four MIT faculty named 2023 AAAS Fellows

Video Spotlight

Thriving Stars at MIT EECS

The Thriving Stars program in MIT’s Department of Electrical Engineering and Computer Science is on a mission to improve gender representation in electrical engineering and computer science.